A study conducted by King’s College London found that three leading Large Language Models (LLMs), namely ChatGPT, Claude, and Gemini, preferred nuclear signaling in 95% of simulated war game cases. This illustrates the potential perils of using Artificial Intelligence (AI) in military operations without oversight and suggests that the models are far from reliable for unregulated military use.

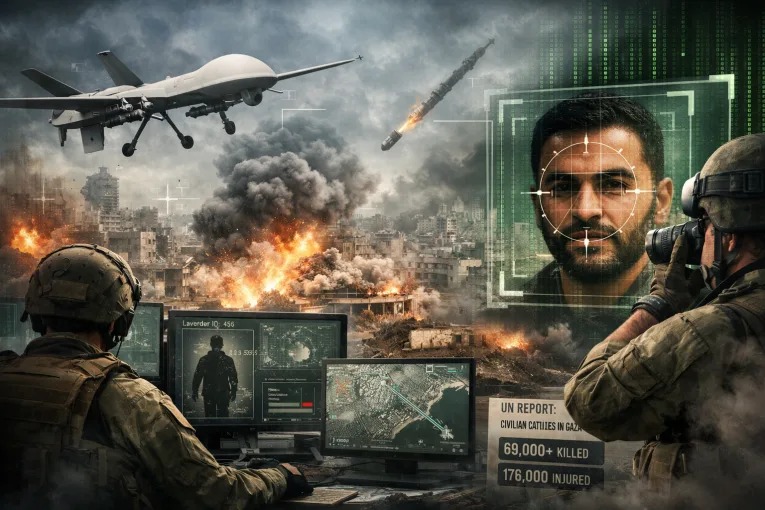

While there is no likelihood of handing over nuclear command and control to AI, it is fair to say that the growing military applications of AI, including summarizing huge amounts of data, shortlisting targets, ranking threats, and recommending strikes, have revolutionized warfare. This has raised unprecedented concerns, mostly ethical, about AI deployment in military systems and its use during active war.

The ongoing US-Israel war with Iran exemplifies the use of multiple modern technologies and warfare strategies, including AI in military operations. Within this context, the recent termination of the partnership between Anthropic and the US Department of War, and the subsequent deal between OpenAI and the Pentagon, have raised concerns regarding the extent to which AI should be integrated into military operations.

The use of AI in classified military operations raises two main concerns, both also put forward by Anthropic. The first is mass surveillance and the privacy of personal data; the second is the development and deployment of fully automated weapons.

As the subject became a heated public discussion, the public’s verdict on the matter became clear: ChatGPT saw a record 295% increase in its average daily uninstall rate, while demand for Claude rose, making it a top-ranked application on Apple’s App Store.

Public criticism of the deal forced OpenAI to amend some of its terms to ensure that the system will not be intentionally used for surveillance of American nationals, reflecting the power of mass dissent. But this still leaves ambiguity regarding the data security of users elsewhere in the world.

This episode of Anthropic vs. Pentagon illustrates the rising involvement of private AI firms in a state’s military operations and the ethical paradox associated with such partnerships. It has shifted the focus of the public, policymakers, and researchers all over the world towards the growing military applications of AI and the lack of ethical guidelines regulating them.

Research on the prospects and challenges of AI integration in warfare, especially the development of fully autonomous systems, shows that current AI models are nowhere near capable of making autonomous decisions without human oversight. This suggests that AI should not be trusted with crucial tasks that could drastically impact the lives of millions. One of the major issues is AI hallucinations, which can have grave consequences in military operations if they go unchecked.

The major issue with mass surveillance through AI models is that it undermines people's fundamental liberty and creates privacy concerns. These systems can be used to monitor personal accounts on social media, access cameras and facial recognition systems to track people in real time, and summarize data to recognize suspicious patterns. The results can be biased, and individuals who are flagged automatically may face discrimination and unfair restrictions.

The proponents of AI integration in military systems stress that AI presents endless possibilities and opportunities that can assist decision makers, especially considering time constraints. Furthermore, those advocating AI use by the state for “all lawful purposes” emphasize that AI use does not require guardrails when national security is involved.

Despite their potential, AI models in their current forms are often inaccurate and opaque; their integration into military operations therefore requires strict laws and regulations to ensure ethical use, human oversight, and a fuller understanding of the broader risks involved.

In the absence of any national or international regulations governing how foreign states and private corporations use data collected by generative AI systems and LLMs, concerns regarding misuse of data, mass surveillance, and AI disinformation remain paramount. This leaves users vulnerable to the adverse impacts of data extraction and raises the concern that AI can become a tool for exploiting them.

Because dealing with AI ethics in practice presents complex challenges, states and relevant non-state actors need to collaborate on rules and principles that clearly define the limits of the military use of AI and establish red lines that should not be crossed.

Establishing globally recognized AI norms and regulations can address the concerns regarding mass surveillance by declaring it unethical and unlawful not only for American citizens but for users all over the world. Furthermore, developing a comprehensive framework to regulate AI applications in the military can mitigate the risks associated with automated warfare. Without such guidelines, the integration of AI into military systems risks transforming these powerful technologies into tools for mass surveillance and indiscriminate automated violence.